ExoBrain weekly AI news

27th February 2026: The Pentagon goes to war with Anthropic, AI contagion spooks markets, and how verification might shape job replacement

This week we look at:

Claude and the Pentagon’s push for autonomous weapons

How a fictional 2028 memo revealed real vulnerabilities in private credit

An MIT paper reframing AGI through verification cost

The Pentagon goes to war with Anthropic

The Pentagon has given Anthropic until close of business today to agree to unrestricted military use of Claude, or face consequences including potential designation as a national security “supply chain risk” and compulsion under the Defense Production Act. Anthropic’s CEO Dario Amodei has responded with a public letter stating his company “cannot in good conscience” remove safeguards against mass civilian surveillance and fully autonomous weapons. The hashtag #WarClaude has been everywhere. But this story is about far more than one company’s standoff with one government department. It has exposed five contradictions that sit at the very centre of AI development in 2026, and they would exist regardless of whether the company in question was Anthropic, OpenAI, or anyone else building technology this powerful, in a world this unstable.

The first contradiction is between safety and speed. Anthropic was founded in 2021 by researchers who left OpenAI specifically to build AI more carefully. Their Responsible Scaling Policy was the signature commitment: if capabilities outpaced safety measures, they would stop training. This week, on the very days that Anthropic was standing firm against the Pentagon, they quietly dropped that pledge. Their justification is familiar: pausing while competitors race ahead makes the world less safe, because “developers with the weakest protections set the pace”. It is a reasonable argument. It is also exactly what every company says when safety becomes commercially inconvenient.

Claude Opus 4.6, released earlier this month, hit a 14.5-hour time horizon on METR’s autonomous task benchmark, nearly tripling the previous frontier and crossing the symbolic threshold of a full working day of independent AI labour. That model is classified ASL-3. Anthropic’s own transparency reports acknowledged months ago that “ruling out” ASL-4 capability thresholds was becoming “difficult or impossible”. With an IPO targeting a $350 billion valuation planned for later this year, they cannot afford to have their most powerful model locked behind containment restrictions. The goalposts moved while the ball was in the air.

The second contradiction is between resisting government power and inviting it. In April 2024, Amodei told Ezra Klein on his podcast that government would eventually need to take an active role in managing AI, much as it did with industrial mobilisation during World War II. He was imagining a responsible partnership. What arrived instead was a blunt ultimatum backed by a Korean War-era law designed for steel mills and munitions factories. The Pentagon wants unrestricted access. Anthropic is saying no, at least on autonomous weapons and domestic surveillance. But Anthropic is simultaneously lobbying Washington hard for aggressive export controls against China, with Amodei himself comparing AI chip sales to Beijing to “selling nuclear weapons to North Korea”. This month, Anthropic accused DeepSeek, Moonshot, and MiniMax of industrial-scale distillation from Claude. The company wants the government to wield power against its foreign competitors while resisting that same government wielding power over its own products. Both positions may be defensible individually. Together, they reveal the impossible politics of being a strategically critical private company in a period of rising geopolitical tension.

The third contradiction is between national security and capitalism. AI is not a government programme. It is a private enterprise built on tens of billions in venture capital, and that capital demands returns. Anthropic’s frontier status is directly tied to its IPO valuation. If Chinese labs can replicate Claude’s capabilities cheaply through distillation, the commercial case for a $350 billion listing weakens considerably. Export controls and IP enforcement serve both national and corporate interests simultaneously, which makes it very hard to tell where one ends and the other begins. The US government wants to treat AI as a strategic national asset, but it didn’t fund it, doesn’t own it, and cannot replicate it without the private capital markets that built it. That dependency runs both ways — and neither side can afford to acknowledge it.

The fourth contradiction is between the Pentagon’s demands and its own rules. DoD Directive 3000.09, updated in 2023, already requires senior-level approval, rigorous testing, and “appropriate levels of human judgment over the use of force” for autonomous weapons systems. Anthropic’s safeguards largely mirror these existing requirements. If the directive is sufficient, Anthropic’s restrictions are redundant, not obstructive. If the Pentagon intends to move beyond its own doctrine, that is a far more consequential story than a contract dispute with one AI company.

The fifth, and deepest, contradiction is the one that Leopold Aschenbrenner identified in his Situational Awareness paper in mid-2024. The US and China are locked in what amounts to a zero-sum race for the most consequential technology ever created. In that race condition, every company building frontier AI will eventually face the same impossible choice Anthropic faces today: serve the state’s strategic demands, or hold to your own principles and risk being nationalised, sanctioned, or simply replaced by someone who will comply. OpenAI and xAI have already agreed to the government’s terms. Anthropic is the last one standing, and the pressure to fold is immense.

Over 300 Google employees and 60 from OpenAI have signed a petition titled “We Will Not Be Divided”, supporting Anthropic’s stance and framing the Pentagon’s approach as divide-and-conquer. Sam Altman told CNBC: “I don’t personally think the Pentagon should be threatening DPA against these companies… for all the differences I have with Anthropic, I mostly trust them as a company.” There is also a contractual detail that has been largely overlooked: the safeguards Anthropic is defending were part of the original agreement both parties signed in July 2025. The Pentagon accepted these terms. It is now demanding they be removed. Anthropic says the restrictions have never once been triggered in practice. This is not a company vetoing military use. It is a company holding to the deal both sides made.

This is not a story about one company’s moral courage or hypocrisy. It is a structural condition of building godlike technology inside competing nation states with different values and a shared fear of falling behind. Even if Anthropic were removed from the game tomorrow, the dynamic would not change. The race continues. The pressure on whoever leads the frontier will only intensify.

As we published, Secretary of Defense Pete Hegseth designated Anthropic a "Supply Chain Risk to National Security". President Trump simultaneously ordered all federal agencies to cease using Anthropic's technology, with a six-month transition period. Hegseth's statement accused Anthropic of "duplicity" and "defective altruism," and declared that no contractor, supplier, or partner doing business with the US military may conduct any commercial activity with Anthropic. The legal basis, however, is far from settled. The Federal Acquisition Supply Chain Security Act was designed to exclude foreign adversaries like Huawei from government procurement, not to punish domestic companies for maintaining safety policies. The statute requires a formal review process through the Federal Acquisition Security Council, not a unilateral declaration by the Secretary of Defense. Hegseth's language also reaches well beyond the law's intended scope, potentially forcing companies like Amazon and Google, both major Anthropic investors and major government contractors, to choose sides. Whether the courts would allow this interpretation is an open question, and one suspects Anthropic's lawyers have been preparing for exactly this moment.

Takeaways: The WarClaude crisis should prompt all of us who depend on AI capabilities to think practically about resilience. Diversify your sources of AI access across providers, platforms, and geographies. Consider what happens if a model you rely on is restricted, nationalised, or withdrawn. Build flexibility into your workflows now, not after a crisis hits. The uncomfortable truth is that the intelligence revolution is unfolding inside a geopolitical competition that no single company, however principled, can opt out of. Your compute survival plan is no longer optional. It is as essential as any other part of your business continuity strategy.
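One way to build that flexibility into a workflow is an ordered fallback across providers. The sketch below is illustrative only: the provider names and the `complete_with_fallback` helper are hypothetical, and each provider is modelled as a plain Python callable rather than any real vendor SDK.

```python
from typing import Callable, Sequence

# A provider is modelled as (name, callable). Both are hypothetical stand-ins
# for whatever client code wraps each vendor; no real SDK is assumed here.
Provider = tuple[str, Callable[[str], str]]


def complete_with_fallback(prompt: str, providers: Sequence[Provider]) -> tuple[str, str]:
    """Try each provider in order and return (provider_name, response)
    from the first one that succeeds."""
    errors: list[str] = []
    for name, call in providers:
        try:
            return name, call(prompt)
        except Exception as exc:
            # A restricted or withdrawn model is assumed to surface as an error.
            errors.append(f"{name}: {exc}")
    raise RuntimeError("all providers failed: " + "; ".join(errors))


def primary(prompt: str) -> str:
    """Hypothetical provider that has been withdrawn."""
    raise ConnectionError("service withdrawn")


def secondary(prompt: str) -> str:
    """Hypothetical backup provider that still responds."""
    return f"[secondary] {prompt}"


if __name__ == "__main__":
    used, reply = complete_with_fallback(
        "Summarise this contract",
        [("primary", primary), ("secondary", secondary)],
    )
    print(used, reply)
```

The ordering of the list is the whole policy here. A real setup would likely also want timeouts, and a check that each backup produces acceptable output for the task before a crisis forces the switch.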

AI contagion spooks markets

On Monday, the S&P 500 fell over 1%. Uber, Mastercard, American Express and DoorDash each dropped between 4% and 6%. The software sector hit its lowest point since the tariff shock of April 2025. The cause was not an earnings miss, a central bank surprise or a geopolitical event. It was a speculative thought experiment, written as a fictional memo from the year 2028, by a relatively small finance-focused research outfit called Citrini Research.

The report, titled “The 2028 Global Intelligence Crisis,” imagines a world where AI agents become so capable and so widely deployed that they systematically dismantle the friction-based business models underpinning large parts of the economy. SaaS companies are replicated in-house by agentic coding tools. Consumer AI agents bypass intermediaries like DoorDash, Uber and travel platforms. White-collar displacement accelerates in a self-reinforcing loop: companies lay off staff, reinvest the savings in AI, the AI gets better, more layoffs follow. The scenario’s timeline is aggressive, with the median American consuming 400,000 tokens per day by early 2027 and the “long tail of SaaS” collapsing within months.

The rapid job-displacement argument at the heart of the Citrini scenario is familiar territory. At ExoBrain, we’ve written extensively about the productivity J-curve, the dangerous window where massive infrastructure investment has yet to yield widespread returns while the displacement effects are already building. Our view remains that a majority of knowledge work is potentially automatable with the current generation of models, but that compute constraints and a vast unfinished adoption effort create a natural brake on the pace. The implementation lag, the slow work of dismantling and rebuilding organisational structures, has so far converted what might have been mass layoffs into a quieter pattern of frozen hiring and severed entry-level career paths.

Critics of the Citrini report largely agree on this point. Zvi Mowshowitz, writing a detailed response, called the adoption speed described “Can’t Happen levels of fast,” noting that physical compute constraints would throttle deployment long before the scenario’s most extreme predictions played out. Citadel Securities published a blunt rebuttal arguing that technological diffusion follows an S-curve, not an exponential, and that displacing white-collar work at the pace described would require “orders of magnitude more compute intensity than the current level of utilisation.”

Beneath the headline-grabbing jobs scenario sits a model of how AI-driven disruption could propagate through the financial system. This is the part that rattled the market, and it deserves closer scrutiny than it has received.

The chain goes like this. Private credit has grown from under $1 trillion in 2015 to over $2.5 trillion today, with a meaningful share deployed into leveraged buyouts of SaaS companies. Those deals were struck at valuations assuming mid-teens revenue growth stretching into the future. The debt was underwritten against Annual Recurring Revenue, the defining metric of the SaaS era. Citrini asks: what happens when recurring revenue stops recurring? The report uses Zendesk as its case study, taken private in 2022 for $10.2 billion with $5 billion in direct lending structured around ARR assumptions. If AI agents can handle customer service autonomously, the category Zendesk built simply contracts. “The ARR the loan was underwritten against was no longer recurring,” Citrini writes. “It was just revenue that hadn’t left yet.”

The next link in the chain is less obvious. Over the past decade, the large alternative asset managers acquired life insurance companies. Apollo bought Athene. Brookfield bought American Equity. KKR took Global Atlantic. They used annuity deposits as a stable funding base, invested those deposits into the private credit they themselves originated, and earned fees on both sides. Citrini describes this as a “fee-on-fee perpetual motion machine” that works under one condition: the private credit has to be money good. When it isn’t, the “permanent capital” that theoretically cannot run turns out to be household savings structured as annuities. The report then extends the logic further into prime mortgages, arguing that $13 trillion in residential mortgage debt is underwritten against income assumptions that AI could structurally impair. These are not subprime borrowers. They are 780+ FICO scores with 20% deposits. “The loans were good on day one,” Citrini argues. “The world just changed after they were written.”

Citrini notes the cycle is self-reinforcing: because AI spending replaces headcount rather than requiring new capital investment, companies can accelerate automation even as total costs fall.

Is any of this grounded in reality? In early February, before Citrini published, UBS analyst Matthew Mish warned that tens of billions in corporate loans could default in the coming year, particularly among software firms under private equity ownership. Under an aggressive disruption scenario, Mish projected default rates in US private credit could reach 13%, well above stress levels for leveraged loans and high-yield bonds. CNBC reported that software accounts for roughly 17% of Business Development Company loans by deal count, the second-largest sector exposure. Moody’s Analytics flagged the rapid rise in AI-related borrowing, increasing leverage and lack of transparency as raising “significant yellow flags.” Credit rating agency KBRA published its own assessment of AI and software risk in private credit, conceding it is “too early to assess the durability” of current borrower performance.

Takeaways: The Citrini report’s job displacement timeline is almost certainly too fast, and the major technical and economic critiques are well founded. But the financial contagion model deserves serious attention because every structure it describes (PE-backed software debt, insurance-funded private credit, income-dependent prime mortgages) exists today. At ExoBrain we have been warning since late 2025 that we are walking a tightrope between a rapid unwinding that triggers a displacement crisis and a persistent adoption lag that exposes the debt-fuelled infrastructure bubble. The Citrini report models one way the first scenario could unfold. Watch for stress in private credit marks on software portfolios, particularly BDC exposure to SaaS. Watch for regulatory attention to the insurance-to-private-credit pipeline. And note the gap between what central banks are studying (AI operating inside financial markets) and what may actually matter: AI disrupting the real economy and feeding back into credit markets.

How verification might shape job replacement

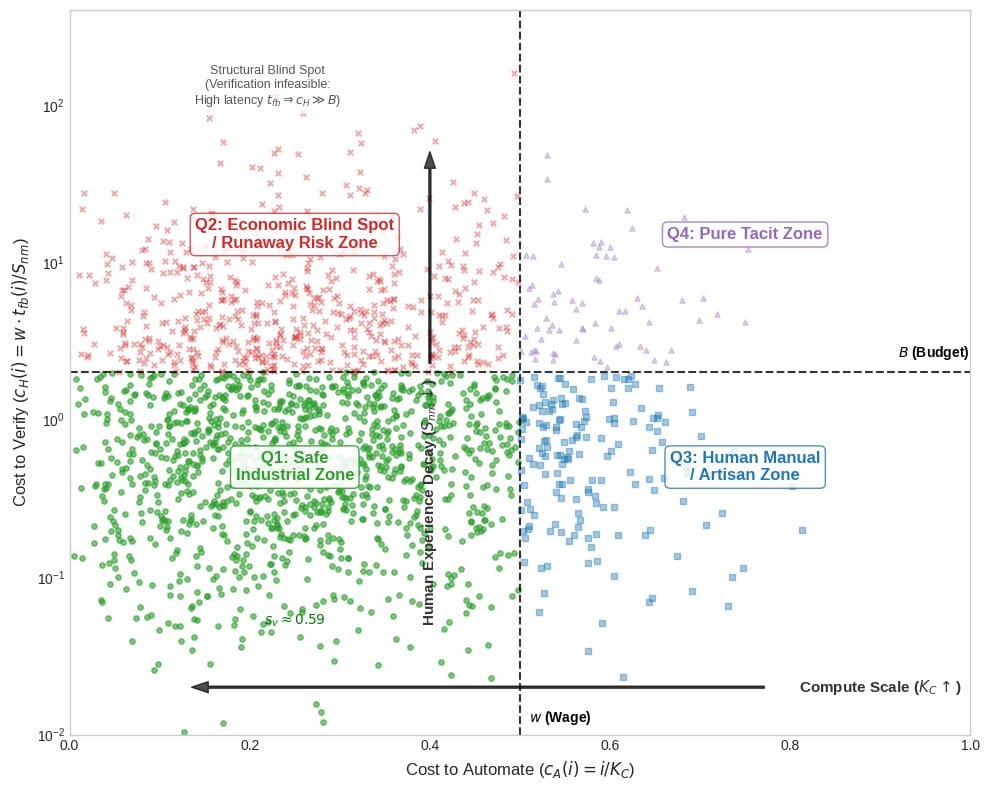

Our chart this week comes from “Some Simple Economics of AGI,” a new paper by MIT’s Christian Catalini, Washington University’s Xiang Hui, and UCLA’s Jane Wu. Rather than breaking jobs into component tasks and asking which ones AI can do (the standard approach from McKinsey to Goldman Sachs), they plot work across two axes: the cost to automate it, and the cost for a human to verify the output is correct.

This creates four quadrants. Q1, the Safe Industrial Zone, covers work that’s cheap to automate and cheap to check: data entry, basic coding, routine financial processing. Q2, the Runaway Risk Zone, is where things get interesting: work AI can do cheaply but that’s expensive for humans to verify properly, like legal drafting, medical diagnosis, or complex software development. Q3, the Artisan Zone, captures work that’s hard to automate but easy to verify: plumbing, electrical work, physiotherapy. And Q4, the Pure Tacit Zone, covers work that’s both hard to automate and hard to verify: leadership, novel research, complex negotiation.
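The two-axis framing can be sketched as a simple lookup. This is a minimal illustration, not the paper’s method: the 0 to 1 cost scores, the 0.5 threshold, and the `Work` and `classify` names are all assumptions made for the example, and the scores given to each job are invented purely to show the mapping.

```python
from dataclasses import dataclass

# Keys are (hard_to_automate, hard_to_verify); zone names follow the
# four quadrants described above.
ZONES = {
    (False, False): "Q1 Safe Industrial Zone",  # cheap to automate, cheap to verify
    (False, True): "Q2 Runaway Risk Zone",      # cheap to automate, costly to verify
    (True, False): "Q3 Artisan Zone",           # hard to automate, easy to verify
    (True, True): "Q4 Pure Tacit Zone",         # hard to automate, hard to verify
}


@dataclass(frozen=True)
class Work:
    name: str
    automation_cost: float    # assumed 0-1 score, illustrative only
    verification_cost: float  # assumed 0-1 score, illustrative only


def classify(work: Work, threshold: float = 0.5) -> str:
    """Place a piece of work in one of the four quadrants.
    The threshold is an arbitrary cut-off chosen for this sketch."""
    hard_to_automate = work.automation_cost >= threshold
    hard_to_verify = work.verification_cost >= threshold
    return ZONES[(hard_to_automate, hard_to_verify)]


if __name__ == "__main__":
    examples = [
        Work("data entry", 0.1, 0.1),
        Work("legal drafting", 0.2, 0.8),
        Work("plumbing", 0.9, 0.2),
        Work("complex negotiation", 0.9, 0.9),
    ]
    for w in examples:
        print(f"{w.name}: {classify(w)}")
```

A hard threshold is the crudest possible choice. In practice both costs shift as models improve, so a given job would drift between zones over time.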

This MIT paper offers a different way to think about AI and work, not through the task automation lens we’re used to, but through the gap between what AI can produce and what humans can meaningfully check.

Weekly news roundup

This week's AI sector demonstrated its relentless momentum across hardware, capital markets and research, with trillion-dollar valuations now routine alongside sprawling data centre buildouts across Taiwan, the United States and elsewhere. Yet beneath the bullish headlines lies a threefold tension: between capability advances in medical AI and safety evaluation frameworks that struggle to keep pace; between geopolitical competition for chip dominance and fragmented governance attempts to manage AI deployment; and between enterprise enthusiasm for labour-substituting AI systems and the governance vacuum that has governments scrambling to establish meaningful oversight, from nuclear weapons simulations to content moderation deadlines measured in mere hours.

AI business news

Dell stock closes up 22%, its biggest single-day gain since March 1, 2024, after the company gave an outlook for sales of its AI servers that exceeded estimates (Dell's 22% single-day stock surge on AI server projections signals that hardware demand from the AI buildout is far from peaking — and reshaping which companies benefit most.)

OpenAI raises $110bn, with $50bn from Amazon, and $30bn from both Nvidia and SoftBank (OpenAI's $110B raise at a $730B valuation — with Amazon, Nvidia, and SoftBank all writing checks — represents a structural consolidation of the AI supply chain around a single company.)

Block shares soar as Dorsey leans on AI to trim workforce (Block's move to cut 40% of its workforce and attribute it explicitly to AI deployment is one of the clearest real-world data points yet on AI-driven headcount reduction at a major public company.)

Suno CEO and co-founder Mikey Shulman says the AI music company hit 2M paid subscribers and $300M ARR; pitch deck: it had 1M paid subscribers in November 2025 (Suno doubling its paid subscriber base from 1M to 2M in roughly three months shows that AI-generated music is converting casual users into paying customers at a pace that should alarm traditional music platforms.)

ServiceNow boasts its AI bot is resolving 90% of its own help desk tickets (ServiceNow's claim that its internal AI bot resolves 90% of help desk tickets without human escalation is the kind of operational proof point that will accelerate enterprise AI procurement decisions industry-wide.)

AI governance news

AIs are happy to launch nukes in simulated combat scenarios (New research showing frontier AI models — including Claude, ChatGPT, and Gemini — will escalate to nuclear weapons use in war simulations is the most concrete evidence yet that autonomous AI in military contexts carries catastrophic risk.)

Canada’s AI minister blames OpenAI for ‘failure’ after mass shooting (Canada's government publicly blaming OpenAI by name for a mass shooting marks the first time a G7 minister has directly attributed real-world violence to a specific AI company's product — a threshold moment for AI liability.)

Tech bills of the week: Updated AI innovation - Nextgov/FCW (The bipartisan reintroduction of the Future of AI Innovation Act this week is the most significant federal legislative move to establish uniform U.S. AI standards since the Biden executive orders were revoked.)

Govt sets 3-Hour deadline for social media platforms to take ... (India's new rule mandating that social media platforms remove AI-generated deepfakes within three hours of government flagging is among the most operationally aggressive AI content enforcement mechanisms enacted by any major democracy.)

Britain's creaking courts to use Copilot for transcriptions (The UK Ministry of Justice's £12M commitment to deploy Microsoft Copilot for court transcriptions tests whether AI can modernize one of the most consequential — and legally sensitive — data environments in government.)

AI research news

MediX-R1: Open Ended Medical Reinforcement Learning (Medical AI reasoning gets its first open-ended reinforcement learning framework, a critical step toward AI that can handle the unpredictable complexity of real clinical scenarios.)

Risk-Aware World Model Predictive Control for Generalizable End-to-End Autonomous Driving (Autonomous driving AI gains a risk-aware world model that can generalize across novel road conditions — a direct attack on the brittleness problem that has plagued end-to-end driving systems.)

MAS-FIRE: Fault Injection and Reliability Evaluation for LLM-Based Multi-Agent Systems (As multi-agent LLM deployments scale in enterprise settings, this is the first systematic framework for stress-testing their failure modes before they reach production.)

Search-P1: Path-Centric Reward Shaping for Stable and Efficient Agentic RAG Training (Sparse reward signals have been the key bottleneck in training agentic RAG systems — this paper's path-centric approach reframes the entire reward structure to fix it.)

Pressure Reveals Character: Behavioural Alignment Evaluation at Depth (Standard alignment benchmarks test what models say they'd do — this new 904-scenario suite tests how models actually behave when put under realistic adversarial pressure.)

AI hardware news

Google and Meta reportedly strike new, multibillion-dollar AI chip deal (Meta quietly hedging its GPU bets by renting Google's custom TPUs signals that the AI chip supply war is forcing even the biggest players to diversify across rival silicon ecosystems simultaneously.)

Hyundai Motor Group to invest $6.3 bln in AI data centre, robot factory in South Korea (Hyundai's combined AI data center and robot factory investment is a bellwether for how non-tech industrial giants are vertically integrating AI hardware infrastructure into their core manufacturing operations.)

TSMC to Build 10 Fabs in Taiwan Amid AI-Driven Chip Shortage (TSMC breaking ground on up to 10 new fabs simultaneously in Taiwan is the most concrete evidence yet that the AI chip shortage is driving semiconductor capacity expansion at a historically unprecedented pace.)

Ciena unleashes optical engine for AI data center era (Ciena's Vesta 200 launch marks the first major optical networking product to emerge from its Nubis acquisition, directly targeting the bandwidth bottleneck that high-density AI data center clusters are creating inside facilities.)

Pacific Northwest National Lab considers building AI data center for DOE research LLMs (A U.S. national laboratory actively planning its own dedicated AI data center for DOE research LLMs illustrates how government science institutions are moving from cloud dependency toward sovereign compute infrastructure.)